Researchers at KAIST (Korea Advanced Institute of Science and Technology) have used artificial intelligence (AI) to investigate how musical instincts can emerge in the human brain without any special training or learning.

The team, led by Professor Hawoong Jung of the Department of Physics, used an artificial neural network model to study the principle by which musical instincts develop in the brain. Their paper, “Spontaneous emergence of rudimentary music detectors in deep neural networks,” was published in Nature Communications.

Previous research has explored the universality of music and sought to understand its similarities and differences across cultures. A 2019 paper published in Science showed that music is present in every ethnographically distinct society studied and that certain beat patterns and melodic forms recur across them. Neuroscientists have also identified the auditory cortex as the brain region responsible for processing musical information.

In this study, the researchers trained an artificial neural network on AudioSet, a large collection of sound data provided by Google, so that the model learned to recognize the many kinds of sounds it contains.

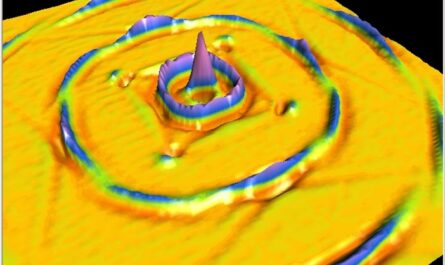

The team found that certain neurons within the model responded selectively to music. These spontaneously emerging music-selective neurons responded only weakly to other sounds, such as animal noises or sounds from nature, but responded strongly to many types of music, both instrumental and vocal.

The response patterns of these artificial neurons resembled those observed in the auditory cortex of the human brain. For example, the neurons responded less strongly to music that had been cut into segments and rearranged, indicating that they encode the temporal structure of music. This property was not tied to a single genre; it appeared across 25 different genres, including classical, pop, rock, jazz, and electronic.

When the activity of the music-selective neurons was suppressed, the model's accuracy in recognizing other natural sounds dropped significantly. This suggests that the neural machinery responsible for processing music also helps process other sounds, and that musical ability may have emerged as an instinct, an evolutionary adaptation for better processing sounds from nature.

Professor Jung believes these findings point to an evolutionary basis for the processing of musical information across cultures. He envisions that the model developed in this study, an artificial system with human-like musicality, could find applications in AI music generation, music therapy, and research on musical cognition.

However, the research addresses only the foundation of musical information processing in early development; it does not examine the developmental changes that follow musical learning.

The study sheds light on the origins of musical instincts and offers insight into how the brain may spontaneously develop a cognitive function for music. By using AI models as a research tool, scientists are moving closer to understanding why music is universal across human cultures and what that universality implies for other fields.

*Note:

1. Source: Coherent Market Insights, Public sources, Desk research

2. We have leveraged AI tools to mine information and compile it